| Event | Date | Subject |

|---|---|---|

| Lecture 1 | 21-04 | Introduction to Data and Data Science |

| Lecture 2 | 28-04 | Getting Data: API's and Databases |

| Lecture 3 | 07-05 | Getting Data: Web Scraping |

| Lecture 4 | 26-05 | Text as Data |

| Lecture 5 | 27-05 | Introduction to LLMs |

| Lecture 6 | 09-06 | Prompt Engineering and Structured Data |

| Lecture 7 | 16-06 | Spatial Data and Geocomputation |

Introduction to Applied Data Science

Lecture 7: Spatial Data

Bas Machielsen

Overview

Course Schedule

Outline Today

- First part: introduction to spatial data – vector data and raster data.

- Second part: geocomputation using vector data.

Introduction to Spatial Data

What is Spatial Data?

- Economics increasingly recognizes that location matters.

- Housing prices, regional development, trade patterns, labor markets all have spatial dimensions.

- Traditional regression analysis often ignores spatial relationships.

- Spatial data allows us to ask: Where do economic phenomena occur and why?

- Spatial data is data includes geographic or location information.

- Two main types:

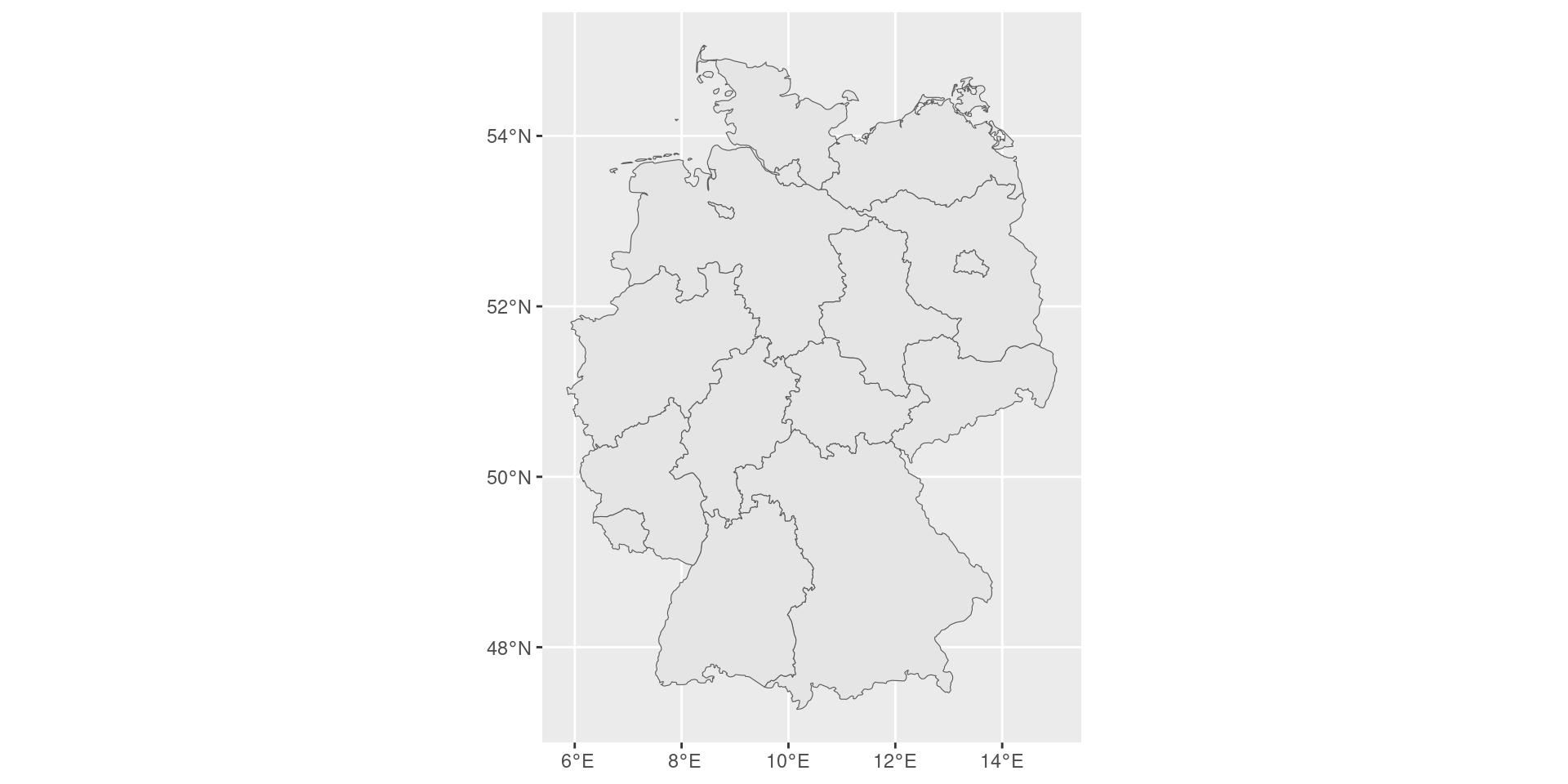

- Vector data: Points, lines, and polygons (e.g., cities, roads, country borders).

- Raster data: Grid-based continuous surfaces (e.g., temperature maps, elevation, population density).

- Examples in economics: census tracts, store locations, satellite imagery of nighttime lights (economic activity proxy).

Vector vs. Raster Data

- Vector data: represents things with points, lines and polygons.

- Vector data can scale and stretch and transform those easily with mathematical operations.

- Can increase precision to arbitrary levels (can always zoom in futher).

- It allows allows us to ask questions about statial relations, such as what is the area of an object, what is the distance from one object to another, or which objects border other objects.

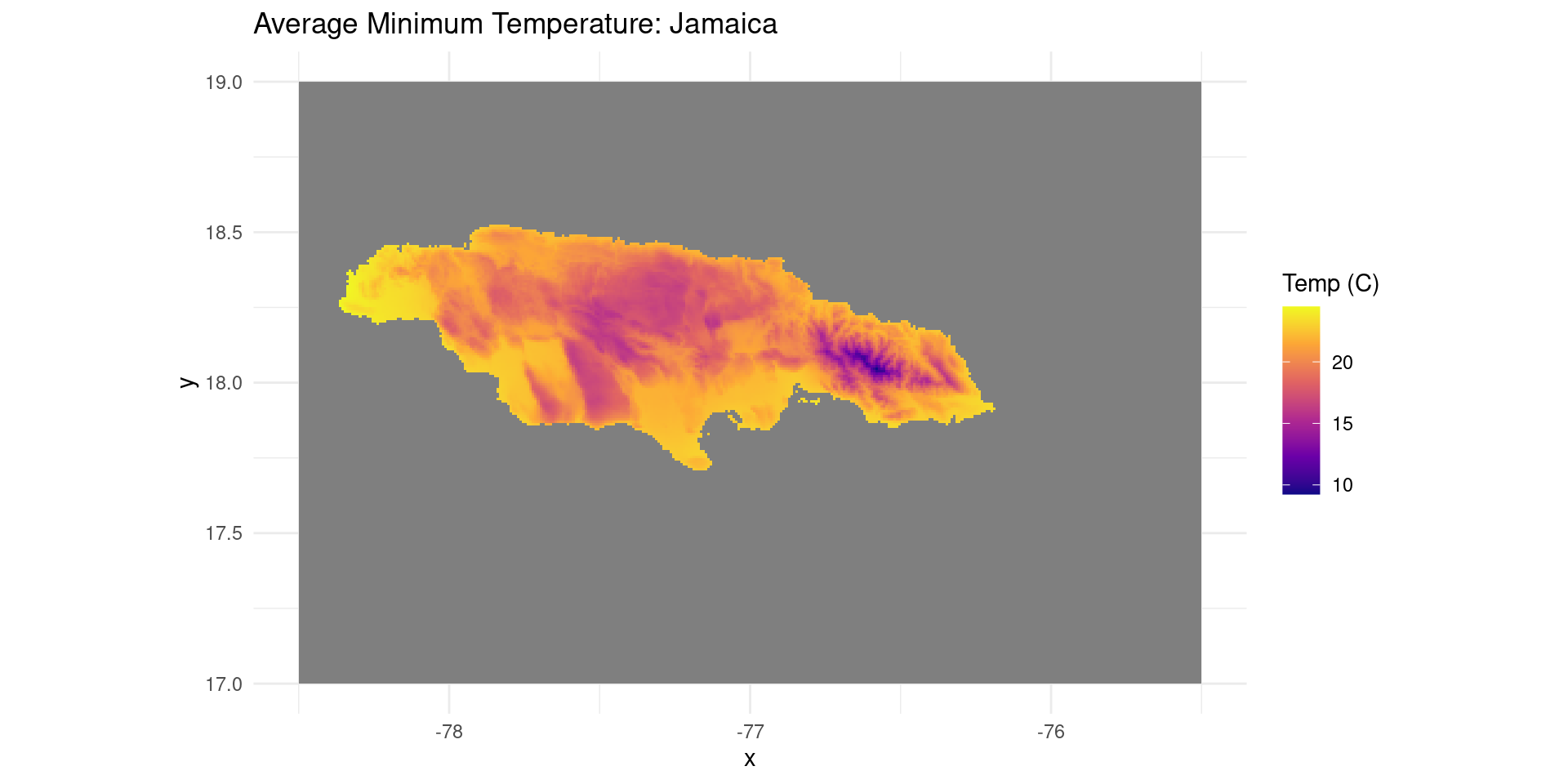

- Raster data: fixed-size tiles or cells (like a mosaic, or like pixels), which form a grid with a fixed resolution.

- Raster data is like an image with geo-coded pixels.

- Satellite images, for example, are usually released in the form of raster data.

- Other examples of raster data include population density images, species occurence data, and meteorological data.

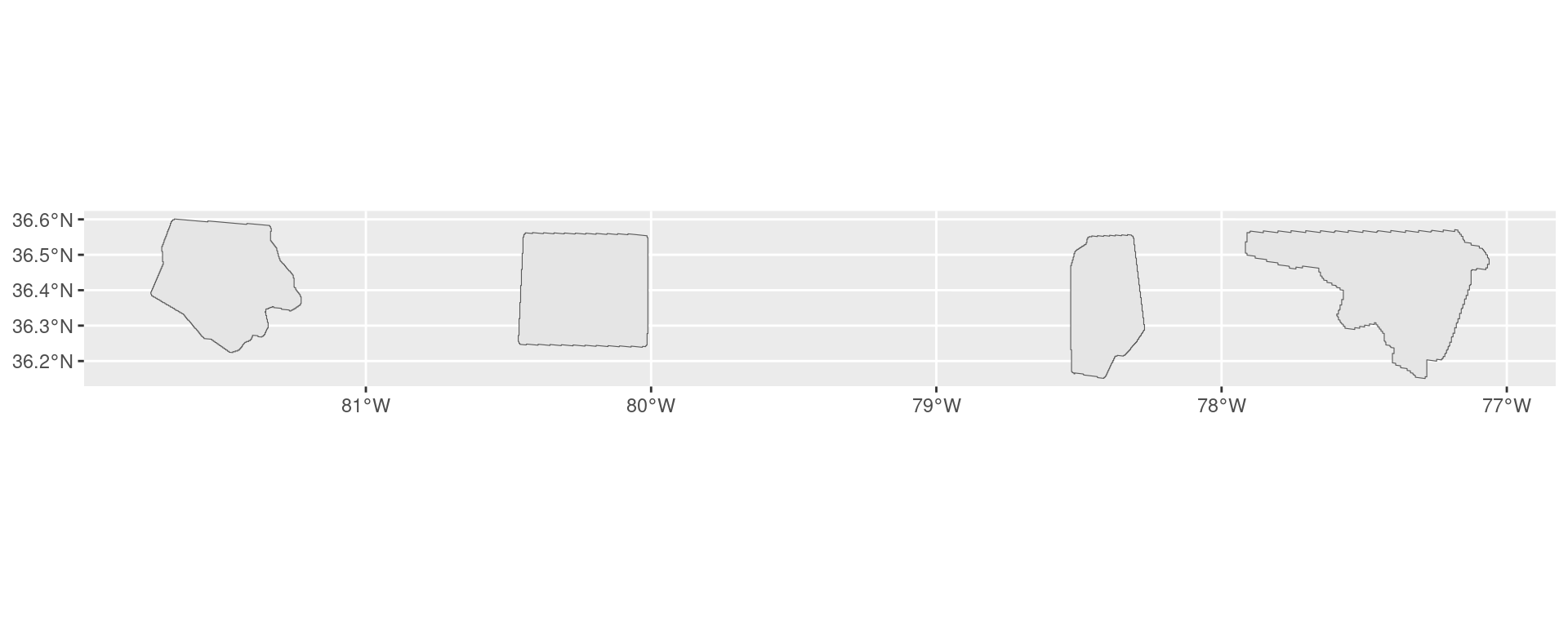

Example Vector Data

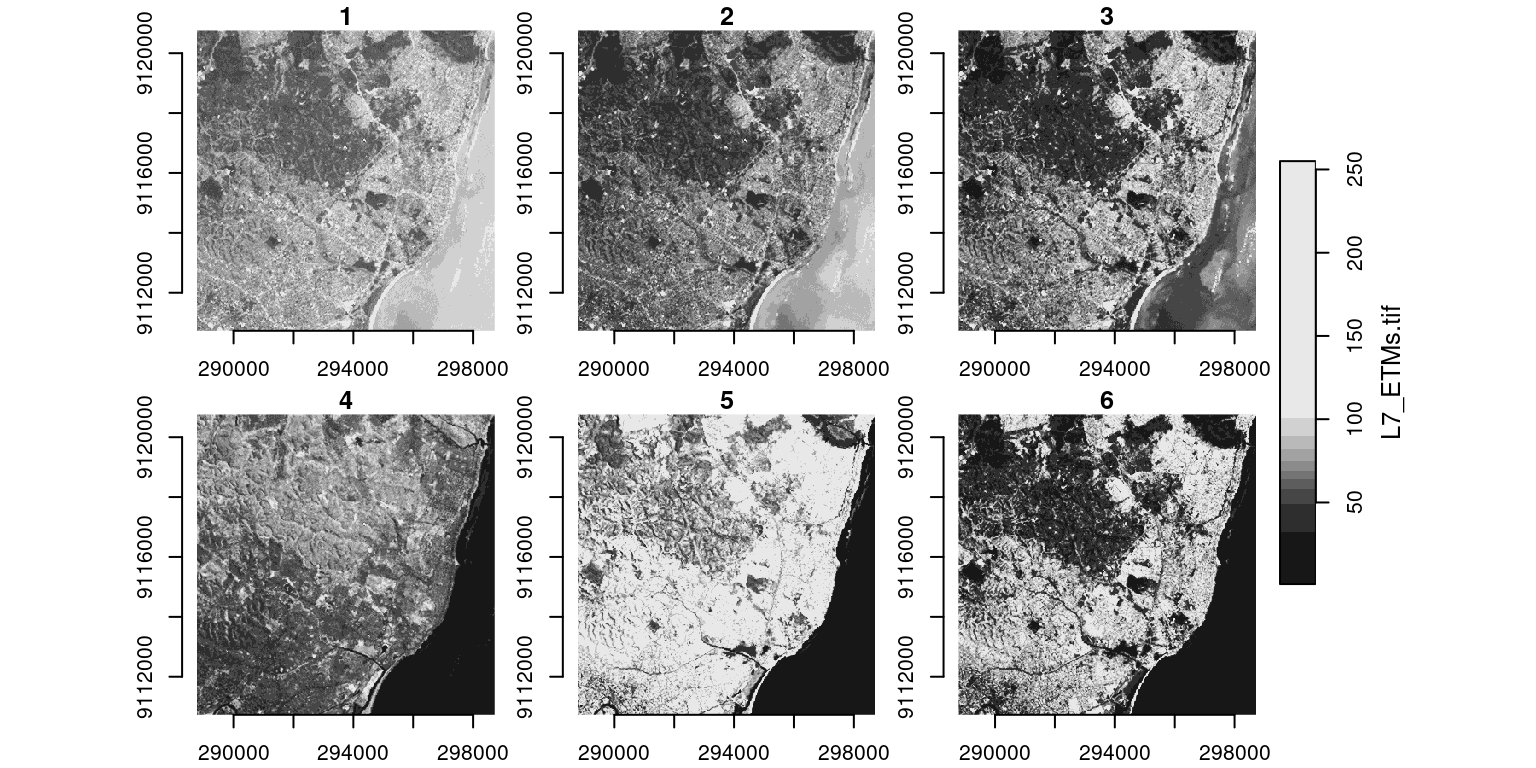

Example Raster Data

R for Spatial Analysis

- Open-source and reproducible research

- Rich ecosystem of spatial packages

- Seamless integration with econometric tools

- Active community and extensive documentation

- Two key packages we’ll focus on:

sf: Simple Features for vector datastars: Spatiotemporal Arrays for raster data

The sf Package: Simple Features

- Modern replacement for older spatial packages (

sp, rgdal). - Integrates smoothly with the

tidyverseset of libraries. - Treats spatial data as data frames with a special geometry column.

- Follows international standards (Open Geospatial Consortium).

- Makes spatial operations feel like regular data manipulation.

Basic sf Operations

- Reading spatial data:

st_read() - Creating spatial objects:

st_as_sf() - Coordinate Reference Systems:

st_crs(),st_transform() - Spatial predicates:

st_intersects(),st_within(),st_distance() - Geometric operations:

st_buffer(),st_union(),st_intersection()

Vector Data Types in Economics

- Points: Store locations, bank branches, firm headquarters

- Lines: Transportation networks, supply chains, pipelines

- Polygons: Administrative boundaries, market areas, zoning districts

- Each type suited for different economic questions

The stars Package: Spatiotemporal Arrays

- Designed for raster data structures

- Handles multidimensional arrays efficiently

- Perfect for satellite imagery, climate data, gridded population

- Can work with temporal dimensions (time series of spatial data)

- Complements

sffor comprehensive spatial analysis

Raster Data in Economics

- Continuous spatial phenomena across a grid

- Applications include:

- Night-time lights as GDP proxy

- Land cover and agricultural productivity

- Pollution and environmental economics

- Temperature and climate impact studies

Coordinate Reference Systems (CRS)

- Critical concept: How we represent 3D Earth on 2D maps.

- Two main types:

- Geographic CRS: Latitude and longitude (unprojected)

- Projected CRS: Flat map with distance in meters/feet

- Must match CRS when combining datasets

- Use

st_transform()to convert between systems

Vector Data

Vector Data as Simple Features

- In R, a Simple Feature is a standard way to describe how real-world objects are stored in computers.

- It consists of:

- Geometry: the shape (Point, Line, Polygon).

- Attributes: Data associated with the shape (GDP, Population, Name).

- In the

sf, the geometry is just a special column (usually named geometry) in a data frame.

Loading Data

- We will use the North Carolina SIDS dataset, included with the

sfpackage. - It contains map data of 100 counties in North Carolina (USA).

Reading layer `nc' from data source

`/home/bas/R/x86_64-pc-linux-gnu-library/4.5/sf/shape/nc.shp'

using driver `ESRI Shapefile'

Simple feature collection with 100 features and 14 fields

Geometry type: MULTIPOLYGON

Dimension: XY

Bounding box: xmin: -84.32385 ymin: 33.88199 xmax: -75.45698 ymax: 36.58965

Geodetic CRS: NAD27[1] "sf" "data.frame"Object Structure

- This dataset contains features, corresponding to each of these counties

- Each of these counties is represented as a polygon and is geocoded

- The bounding box of

ncis represented by coordinates in a certain CRS.

Simple feature collection with 3 features and 14 fields

Geometry type: MULTIPOLYGON

Dimension: XY

Bounding box: xmin: -81.74107 ymin: 36.23388 xmax: -80.43531 ymax: 36.58965

Geodetic CRS: NAD27

AREA PERIMETER CNTY_ CNTY_ID NAME FIPS FIPSNO CRESS_ID BIR74 SID74

1 0.114 1.442 1825 1825 Ashe 37009 37009 5 1091 1

2 0.061 1.231 1827 1827 Alleghany 37005 37005 3 487 0

3 0.143 1.630 1828 1828 Surry 37171 37171 86 3188 5

NWBIR74 BIR79 SID79 NWBIR79 geometry

1 10 1364 0 19 MULTIPOLYGON (((-81.47276 3...

2 10 542 3 12 MULTIPOLYGON (((-81.23989 3...

3 208 3616 6 260 MULTIPOLYGON (((-80.45634 3...Inspecting and Setting CRS

- You cannot analyze data if layers have different CRSs.

- Use

st_crs()to check andst_transform()to change.

EPSG Codes

- To easily identify a CRS, we use EPSG codes.

- Common codes in Economics:

- EPSG:4326: WGS 84 (Standard Lat/Lon used by GPS).

- EPSG:3857: Web Mercator (Used by Google Maps).

- EPSG:326xx: UTM zones (Good for local distance calculations in meters).

Reading and Writing Data

- Economics data comes in many formats: Shapefiles (.shp), GeoJSON, GPKG, KML.

sfhandles almost all of them withst_readandst_write.

Working With Vector Data

- You can work with vector data using the

dplyrlibrary - Select several columns:

Simple feature collection with 4 features and 3 fields

Geometry type: MULTIPOLYGON

Dimension: XY

Bounding box: xmin: 5.00448 ymin: 51.73483 xmax: 7.198506 ymax: 53.55809

Geodetic CRS: WGS 84

name latitude longitude geometry

408 Groningen 53.2790 6.73067 MULTIPOLYGON (((7.194591 53...

410 Drenthe 52.9046 6.60064 MULTIPOLYGON (((7.072151 52...

411 Overijssel 52.4311 6.41649 MULTIPOLYGON (((6.719083 52...

413 Gelderland 52.0635 5.96001 MULTIPOLYGON (((6.771576 52...Working With Vector Data: Filter

- Data can be filtered:

Simple feature collection with 1 feature and 3 fields

Geometry type: MULTIPOLYGON

Dimension: XY

Bounding box: xmin: 4.767854 ymin: 51.94117 xmax: 5.61974 ymax: 52.28549

Geodetic CRS: WGS 84

name latitude longitude geometry

1 Utrecht 52.0749 5.1938 MULTIPOLYGON (((5.408922 52...Working With Vector Data: Mutate

- New variables can be added:

Simple feature collection with 4 features and 2 fields

Geometry type: MULTIPOLYGON

Dimension: XY

Bounding box: xmin: 5.00448 ymin: 51.73483 xmax: 7.198506 ymax: 53.55809

Geodetic CRS: WGS 84

name area geometry

408 Groningen 2386242755 [m^2] MULTIPOLYGON (((7.194591 53...

410 Drenthe 2626372215 [m^2] MULTIPOLYGON (((7.072151 52...

411 Overijssel 3331334171 [m^2] MULTIPOLYGON (((6.719083 52...

413 Gelderland 5113884777 [m^2] MULTIPOLYGON (((6.771576 52...Spatial Join

- In Economics, you might have two spatial datasets often don’t share a common ID.

- For example, you have house coordinates (points) and school districts (polygons). You want to know which district each house belongs to.

- This requires a Spatial Join.

- Joining a spatial

data.framewith anotherdata.frameusing the_join()functions

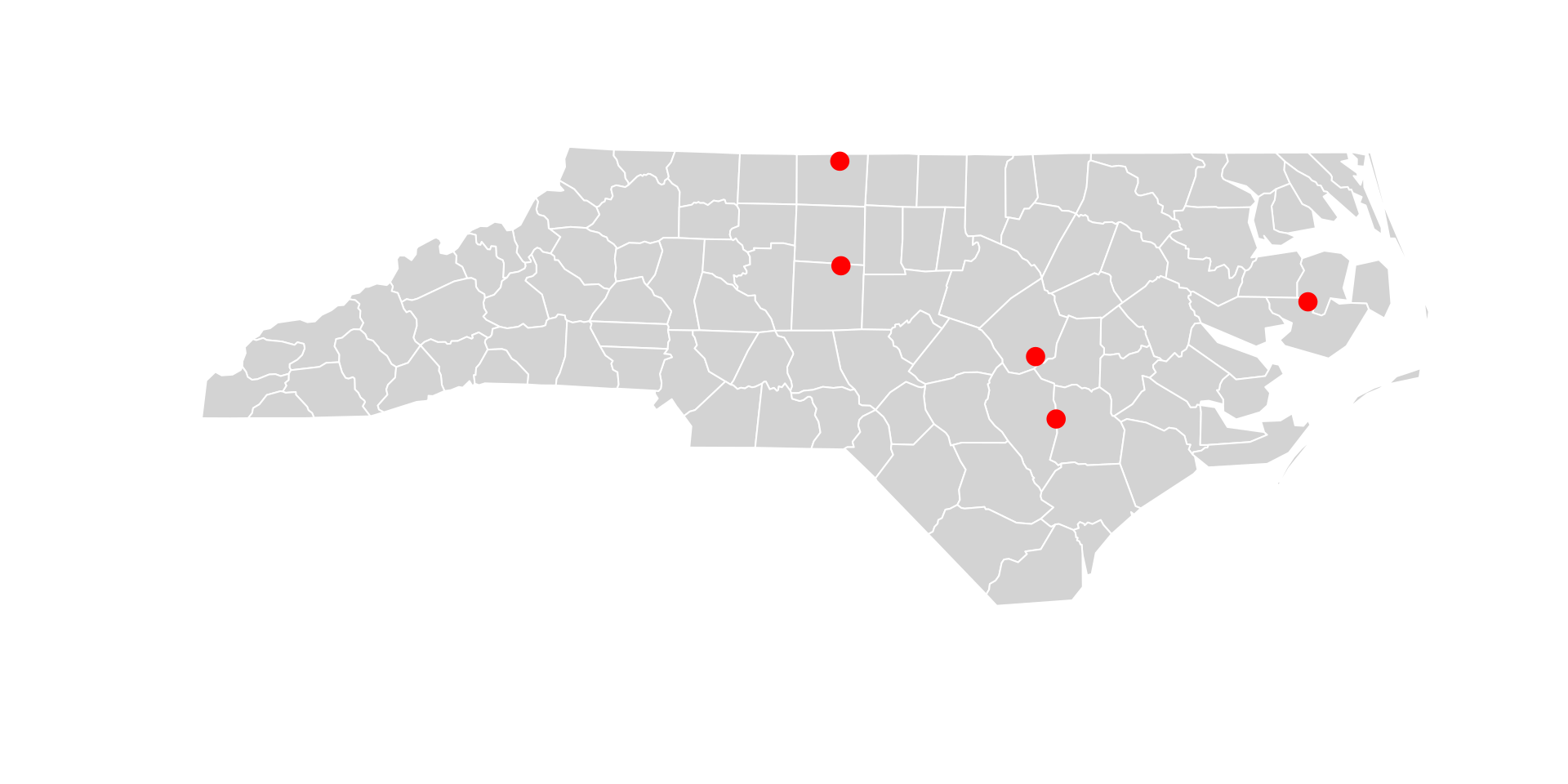

Example: Spatial Join

Example: Spatial Join

Let’s create some random points (simulating firms) and join them to the counties.

Simple feature collection with 5 features and 14 fields

Geometry type: POINT

Dimension: XY

Bounding box: xmin: -79.71374 ymin: 34.98391 xmax: -76.33111 ymax: 36.50538

Geodetic CRS: NAD27

AREA PERIMETER CNTY_ CNTY_ID NAME FIPS FIPSNO CRESS_ID BIR74 SID74

1 0.153 1.616 1839 1839 Rockingham 37157 37157 79 4449 16

2 0.201 1.805 1968 1968 Randolph 37151 37151 76 4456 7

3 0.241 2.214 2083 2083 Sampson 37163 37163 82 3025 4

4 0.207 1.851 1989 1989 Johnston 37101 37101 51 3999 6

5 0.167 2.709 2099 2099 Hyde 37095 37095 48 338 0

NWBIR74 BIR79 SID79 NWBIR79 x

1 1243 5386 5 1369 POINT (-79.71374 36.50538)

2 384 5711 12 483 POINT (-79.70565 35.88825)

3 1396 3447 4 1524 POINT (-78.15076 34.98391)

4 1165 4780 13 1349 POINT (-78.29921 35.3522)

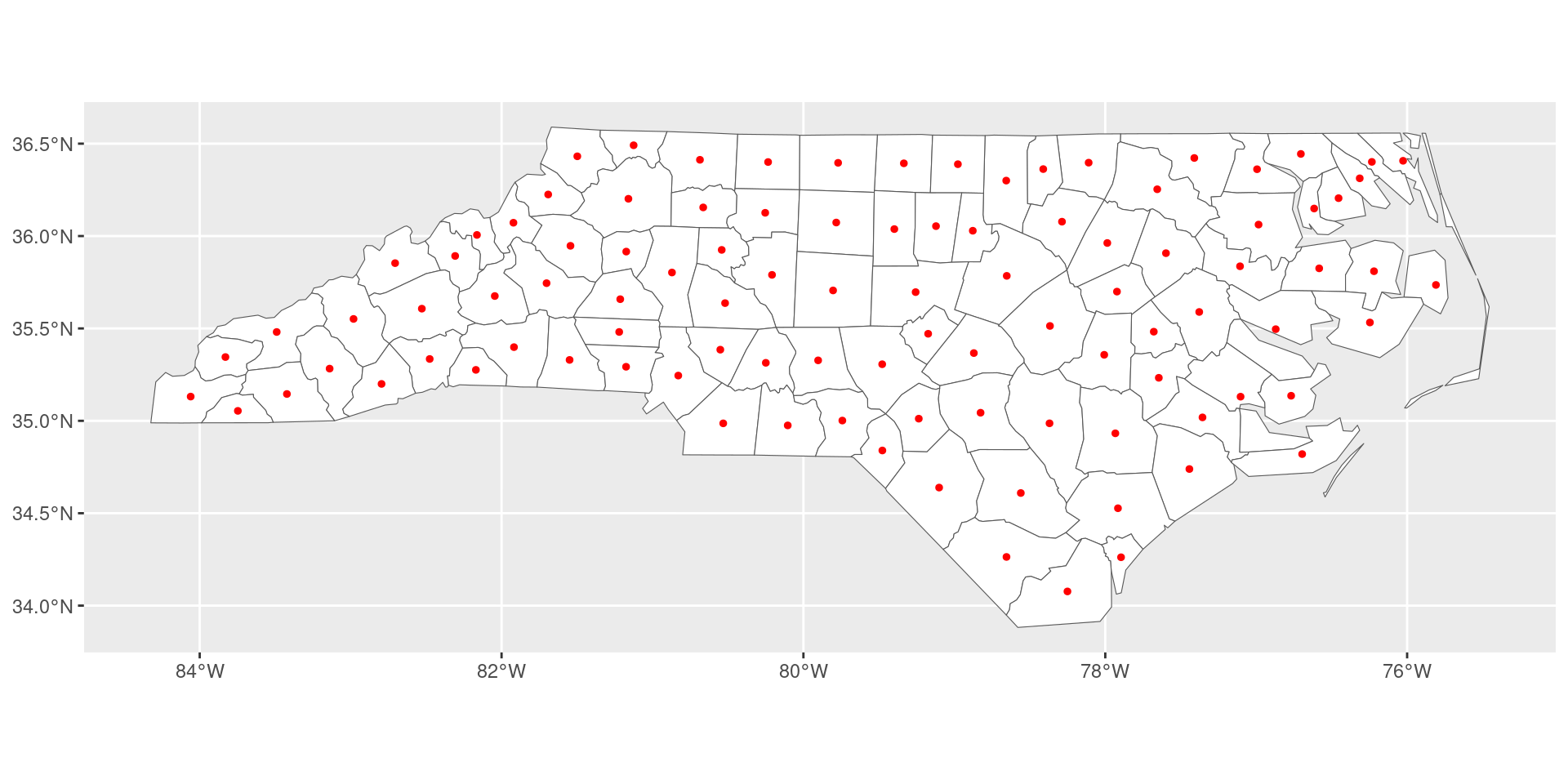

5 134 427 0 169 POINT (-76.33111 35.67557)Centroids

- Sometimes we want to simplify a region to a single point (e.g., to calculate distance between counties).

- A centroid is simply the geometric center of the polygon.

Buffers

- Buffers are used to define an area of influence (e.g., 10km radius around a market).

- This requires a projected CRS (meters), not Lat/Lon (degrees).

Working With Vector Data: Plot

Spatial Topology

- Topology = logical spatial relationships between objects (TRUE/FALSE answers)

- Critical for economics when observations lack shared IDs but share space:

- “Which firms fall inside Enterprise Zones?” (policy evaluation)

- “Which households lie within floodplains?” (risk exposure)

- “Which stores compete within 5km?” (market definition)

- Spatial predicates return logical matrices:

sparse = TRUE(default): returns only intersecting pairs (efficient for large data)sparse = FALSE: returns full TRUE/FALSE matrix (easier for beginners)

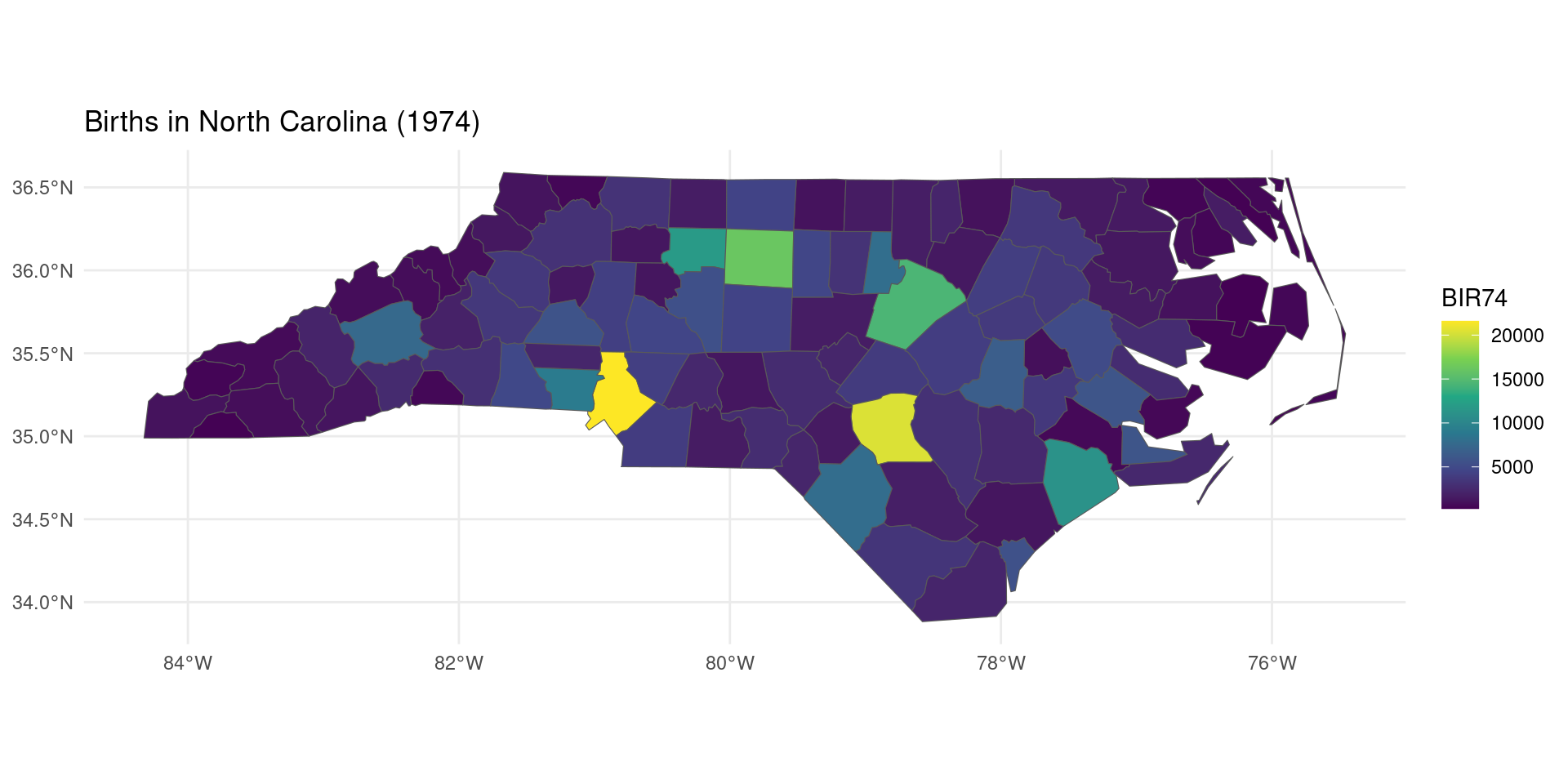

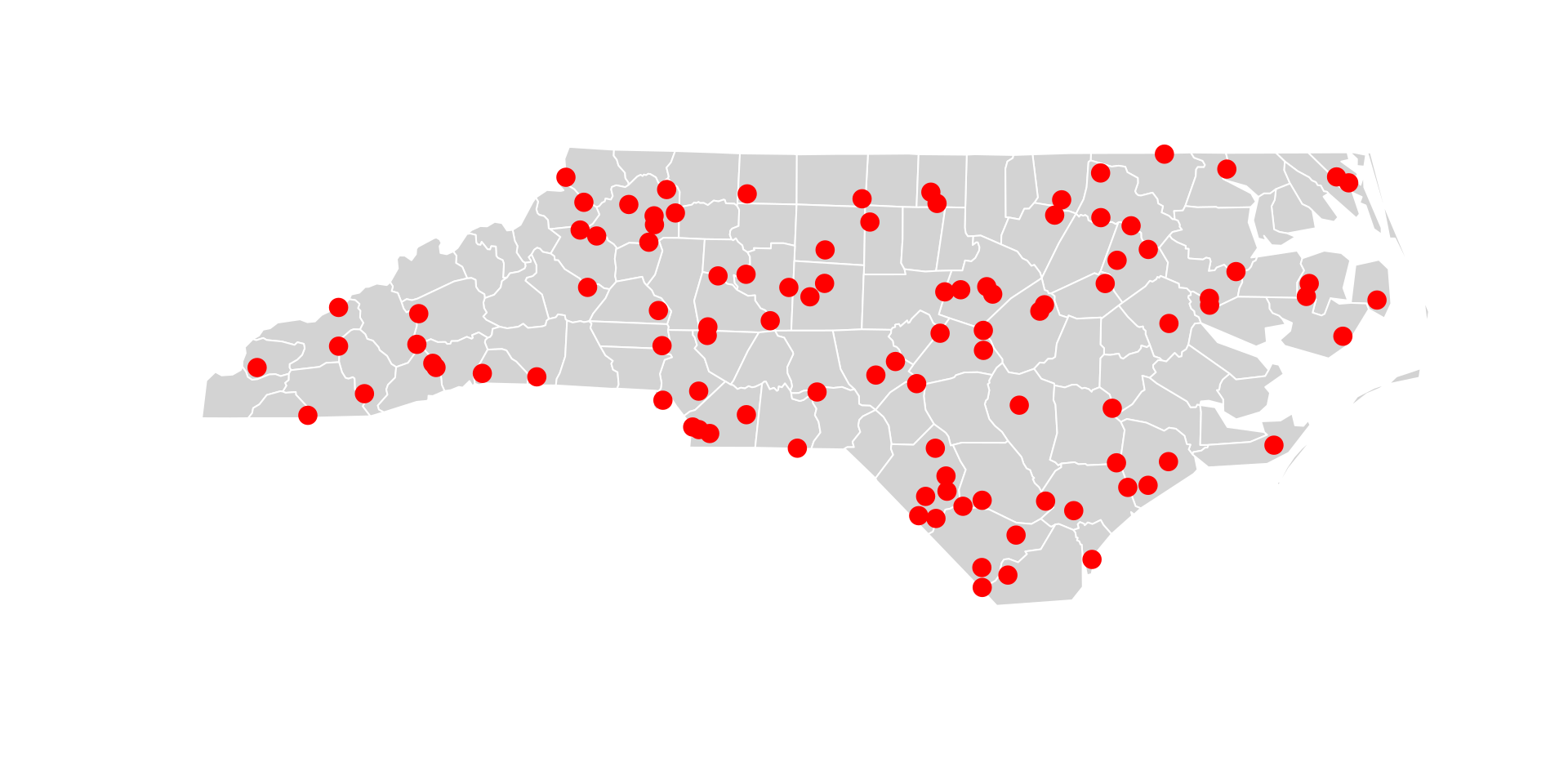

Example: Finding Stores Inside High-Birth Counties

Example: Finding Stores In High-Birth Counties

Let’s simulate the location of several stores over the nc counties and investigate whether the revenue of stores in high-birth counties is higher than the revenue of stores in low-birth counties.

library(sf)

library(dplyr)

# Built-in NC counties (polygons)

nc <- st_read(system.file("shape/nc.shp", package = "sf"), quiet = TRUE)

# Simulate retail stores as county centroids

stores <- st_sample(st_union(nc), size= nrow(nc)) |>

st_as_sf(coords="x") |>

mutate(store_id = 1:nrow(nc), revenue = runif(nrow(nc), 50, 200))

plot(st_geometry(nc), col = "lightgray", border = "white")

plot(stores, add = TRUE, col = "red", pch = 19, cex = 1.5)

Example: Finding Stores Inside High-Income Counties [2]

Example: Finding Stores In High-Birth Counties

The function st_intersects(A, B) returns an \(n \times m\) matrix, where row \(i\) = feature i in A, column \(j\) = feature j in B, and the value = TRUE if they intersect.

# Identify high-birth counties (top quartile of births as proxy)

nc <- nc |> mutate(high_birth = BIR74 > quantile(BIR74, 0.75))

# Which stores fall INSIDE high-birth counties?

intersection_matrix <- st_intersects(

stores,

nc[nc$high_birth, ],

sparse = FALSE

)

# Add result to stores data

stores <- stores |>

mutate(in_hb_area = rowSums(intersection_matrix) > 0)

# Do stores in high-birth counties have more revenue?

stores |>

st_drop_geometry() |>

summarise(

avg_revenue_all = mean(revenue),

avg_revenue_hb = mean(revenue[in_hb_area])

) avg_revenue_all avg_revenue_hb

1 123.9398 130.5875Common Spatial Predicates

| Predicate | Economic Use Case | Example |

|---|---|---|

st_intersects() |

Exposure to zones/policies | “Firms inside tax incentive zones” |

st_within() |

Strict containment | “Households within city limits (not suburbs)” |

st_disjoint() |

Identifying isolation | “Firms with no competitor within 10km” |

st_touches() |

Border effects | “Counties sharing a border with a treatment region” |

st_is_within_distance() |

Distance thresholds | “Schools within 2km of pollution source” |

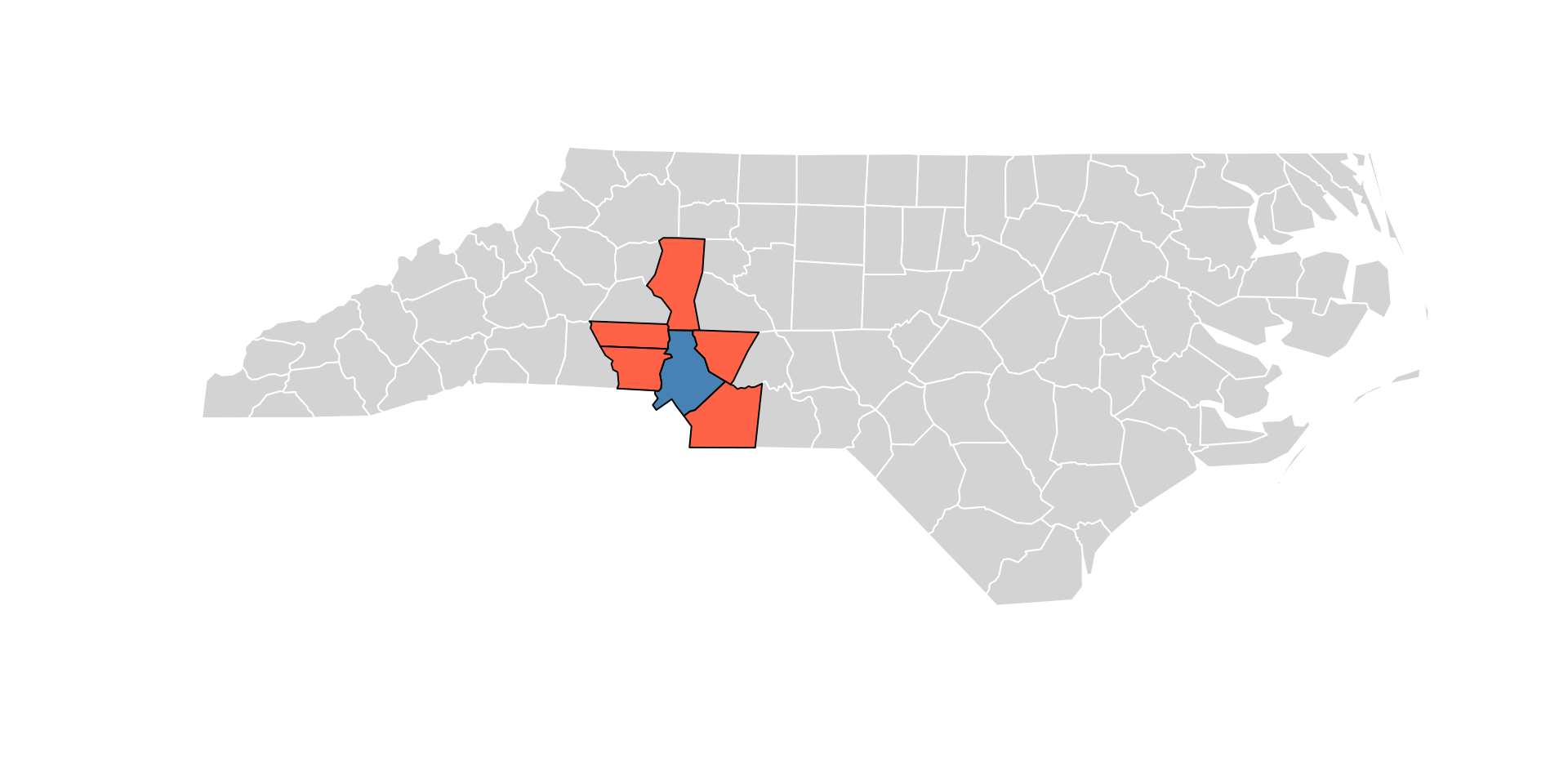

Example: Find Adjacent Counties

Example: Find Counties Touching Highest-Birth County

highest_birth_county <- nc[which.max(nc$BIR74), ]

border_neighbors <- st_touches(highest_birth_county, nc, sparse = FALSE)

# Visualize

plot(st_geometry(nc), col = "lightgray", border = "white")

plot(st_geometry(highest_birth_county), col = "steelblue", add = TRUE)

plot(st_geometry(nc[border_neighbors, ]), col = "tomato", add = TRUE)

Working With Vector Data: Distance

- While the topological relations presented before are binary (true/false), distance relations are continuous.

- The distance between two sf objects is calculated with

st_distance()- But if you put in two spatial data.frames, you’ll get back a distance matrix.

Working With Vector Data: Distance

- Sometimes, you might want to calculate the minimum or maximum distance between each object in a

data.frameand another set of points or polygons - You can do that using the following:

- Take the distance matrix as before.

- For each row, ask what is the minimum or maximum element.

Working With Vector Data: No Data?

- R also has packages that allow us to geocode place names or addresses: the

osmdatapackage can be used to convert names to geographic coordinates.- Use the

getbb()function to “Google” for a place

- Use the

Working With Vector Data: No Data?

- Either get the polygon or the centroid.

- Turn it into a spatial data.frame using

st_as_sf().

Simple feature collection with 1 feature and 0 fields

Geometry type: POINT

Dimension: XY

Bounding box: xmin: 5.082626 ymin: 52.08417 xmax: 5.082626 ymax: 52.08417

Geodetic CRS: WGS 84

geometry

1 POINT (5.082625 52.08417)Raster Data

The stars Package

- stars = spatiotemporal arrays

- Designed for working with data cubes (multi-dimensional arrays)

- Handles both spatial and temporal dimensions

- Integration with the tidyverse and sf packages

Reading Raster Data

- Use

read_stars()to load raster files - Supports many formats: GeoTIFF, NetCDF, and more

stars object with 3 dimensions and 1 attribute

attribute(s):

Min. 1st Qu. Median Mean 3rd Qu. Max.

L7_ETMs.tif 1 54 69 68.91242 86 255

dimension(s):

from to offset delta refsys point x/y

x 1 349 288776 28.5 SIRGAS 2000 / UTM zone 25S FALSE [x]

y 1 352 9120761 -28.5 SIRGAS 2000 / UTM zone 25S FALSE [y]

band 1 6 NA NA NA NA Understanding stars Objects

A stars object contains:

- Attributes: The data values (e.g., reflectance, temperature)

- Dimensions: Information about x, y, time, bands, etc.

from/to: Index rangeoffset: Starting coordinatedelta: Cell size (can be negative for y!)refsys: Coordinate reference system

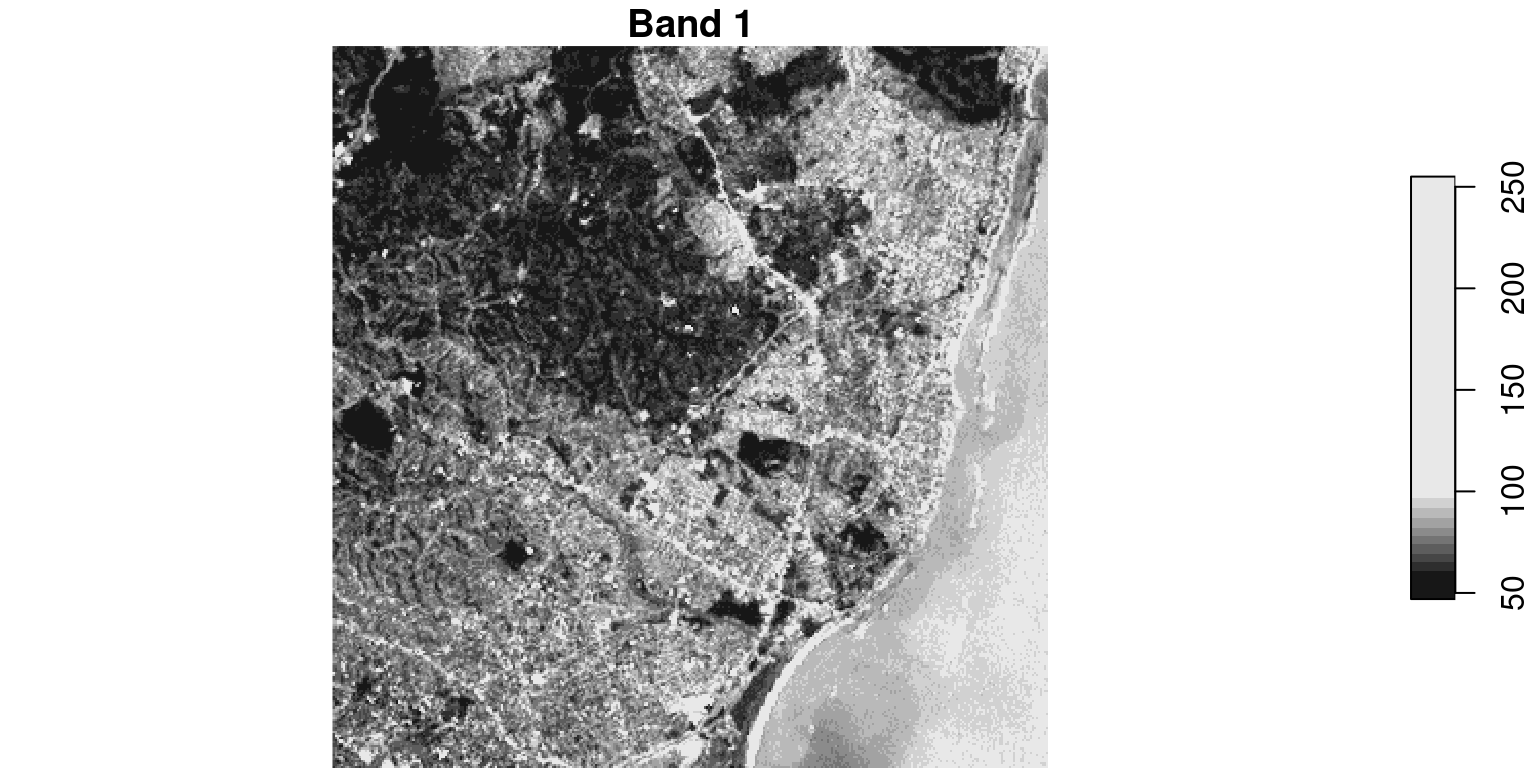

Visualizing Raster Data

- By default, plots all bands/attributes

- Can subset to specific bands or regions

Visualizing Raster Data

- A band is a single “layer” of light that a satellite camera captures.

- Just like your phone camera has sensors for red, green, and blue light to make a color photo, satellites capture many layers — including light our eyes can’t see.

Subsetting Rasters

- There are three main approaches to select a subset of a raster:

stars object with 3 dimensions and 1 attribute

attribute(s):

Min. 1st Qu. Median Mean 3rd Qu. Max.

L7_ETMs.tif 23 44 57 55.85393 65 235

dimension(s):

from to offset delta refsys point x/y

x 1 100 288776 28.5 SIRGAS 2000 / UTM zone 25S FALSE [x]

y 1 100 9120761 -28.5 SIRGAS 2000 / UTM zone 25S FALSE [y]

band 1 3 NA NA NA NA Working with Attributes

- It is also easy to add new attributes on the basis of existing ones, and to split

starsobjects according to attribute.

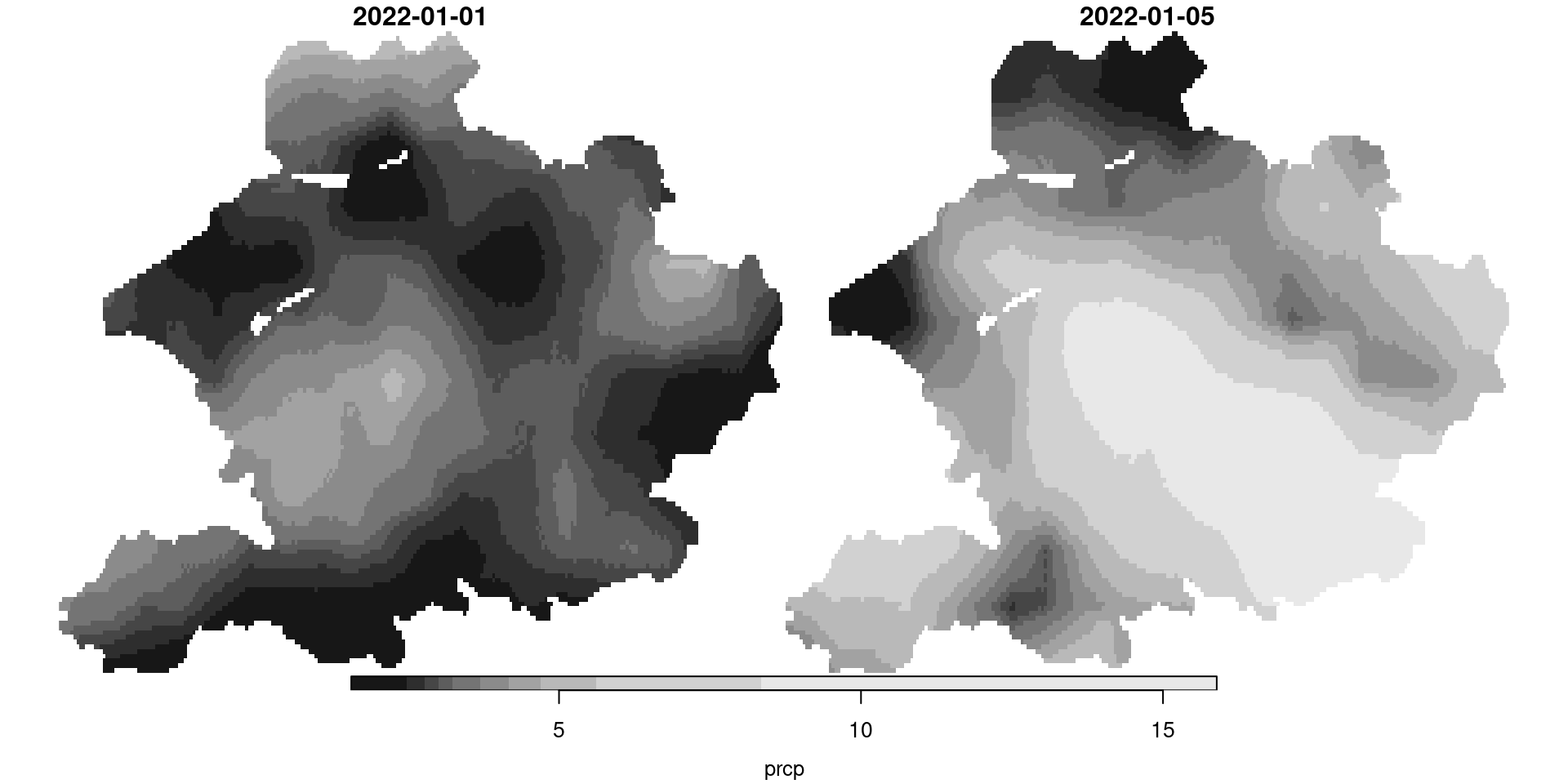

Raster Time Series

starsexcels at handling temporal data- NetCDF files often contain time series

stars object with 3 dimensions and 2 attributes

attribute(s):

Min. 1st Qu. Median Mean 3rd Qu. Max. NA's

pr [mm/m] 0.5900000 56.139999 81.88000 101.26433 121.07250 848.54999 7116

tas [C] -0.4209678 8.898887 15.65763 15.48932 21.77979 29.38581 7116

dimension(s):

from to offset delta refsys values x/y

x 1 81 -85 0.125 NA NULL [x]

y 1 33 37.12 -0.125 NA NULL [y]

time 1 12 NA NA POSIXct 1999-01-31,...,1999-12-31 Extracting Information

[1] "1999-01-31 UTC" "1999-02-28 UTC" "1999-03-31 UTC" "1999-04-30 UTC"

[5] "1999-05-31 UTC" "1999-06-30 UTC" "1999-07-31 UTC" "1999-08-31 UTC"

[9] "1999-09-30 UTC" "1999-10-31 UTC" "1999-11-30 UTC" "1999-12-31 UTC"Coordinate Reference System: NA xmin ymin xmax ymax

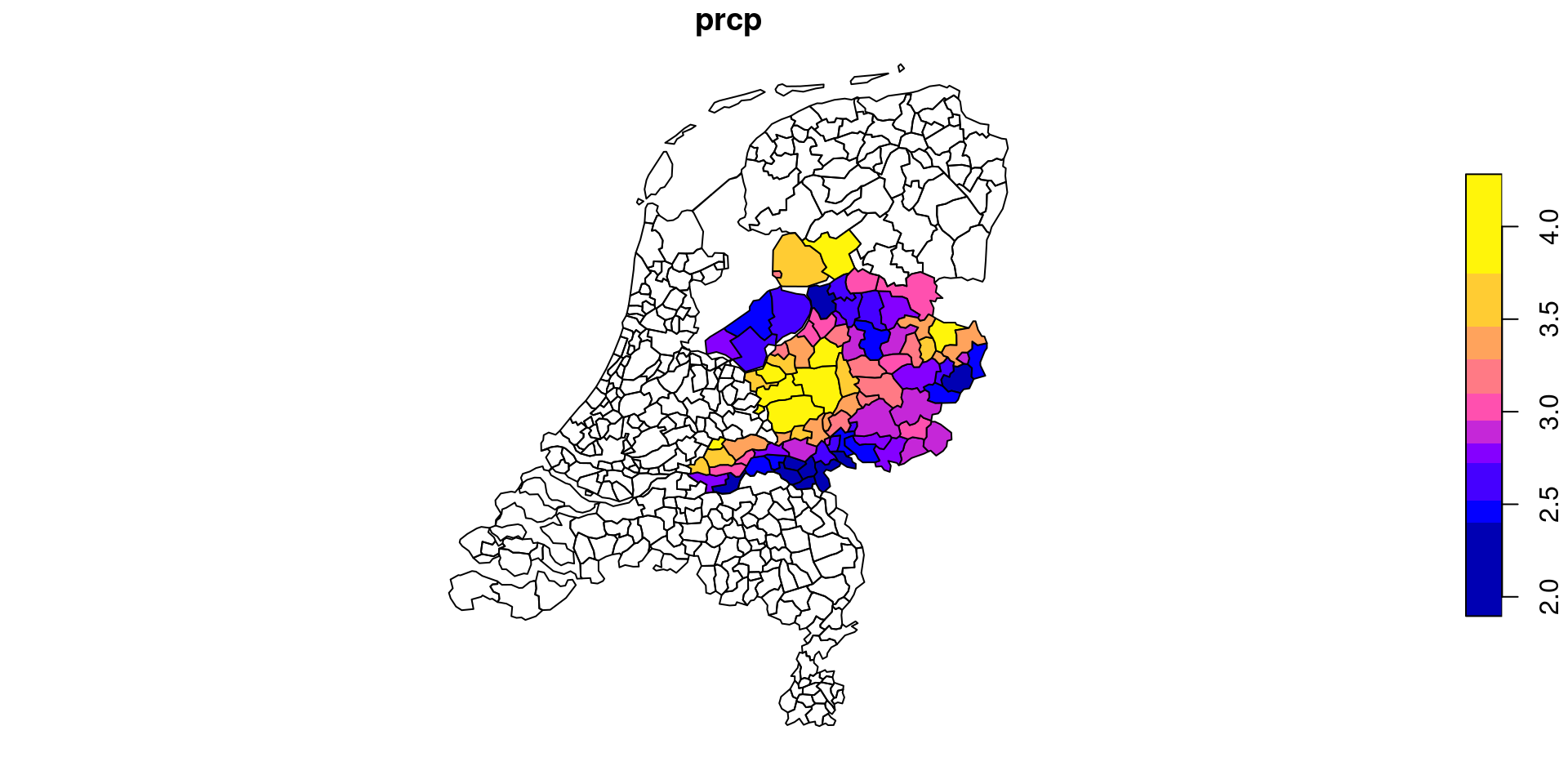

-85.000 33.000 -74.875 37.125 Aggregating Rasters

- Calculate statistics over polygons

- Use

aggregate()with a custom function

R Packages with Vector Data

- Climate & Weather:

geodatafacilitates access to climate, crops, elevation, land use, soil, species occurrence, accessibility, administrative boundaries and other data.rWBclimate- Access World Bank climate data with spatial components, relevant for climate-development nexus research.

- Population:

- The

woprpackage provides access to the WorldPop Open Population Repository and provides estimates of population sizes for specific geographic areas. rnaturalearthprovides vector map data, including country boundaries, states/provinces, populated places, and geographic features.spDatacontains diverse spatial datasets including economic and demographic data. It has vector datasets useful for development economics analysis.

- The

- There is much more out there, see e.g. my website.

R Packages with Raster Data

- Climate & Weather:

easyclimateprovides easy access to daily temperature and precipitation data across Europe. ncdf4 is used for handling NetCDF files, common for European climate models.clcallows for the download and analysis of the CORINE Land Cover (CLC) dataset, which covers European countries.

- Elevation:

elevatrfetches elevation data (DEM) which can be clipped to European extents.

- Environmental Data:

rasterdivis used for calculating landscape diversity indices, often applied in European ecological studies.

- Infrastructure:

- GHSL (Global Human Settlement Layer) provides raster data on human presence in Europe.

Example: Aggregating Rasters

Example: Aggregating Raster over Polygon

library(easyclimate); library(cbsodataR)

# Select a part of the Netherlands

polygons_nl <- cbs_get_sf("nuts1", 2016) |>

st_transform('wgs84') |>

dplyr::filter(statcode=='NL2')

# Get climate data for this part

climate_nl <- get_daily_climate(

polygons_nl,

climatic_var = "Prcp",

period = c("2022-01-01", "2022-01-05"),

output = 'raster'

) |>

st_as_stars()

# Change the name of the value variable to prcp (precipitation)

names(climate_nl) <- 'prcp'

# Select only the precipitation of 5 January

climate_nl[,,,'2022-01-05']stars object with 3 dimensions and 1 attribute

attribute(s):

Min. 1st Qu. Median Mean 3rd Qu. Max. NA's

prcp 1.56 4.04 5.06 5.96972 7.36 15.89 13943

dimension(s):

from to offset delta refsys values x/y

x 1 249 5 0.008333 WGS 84 NULL [x]

y 1 135 52.86 -0.008333 WGS 84 NULL [y]

band 2 2 NA NA NA 2022-01-05

Economic Studies Using Spatial Variation

- Dell (2010): The Persistent Effects of Peru’s Mining Mita

- Uses a spatial regression discontinuity design across the boundary of a forced labor catchment area (the mita) in Peru to show that historical extractive institutions lower household consumption and stunt growth even centuries later.

- Dell and Olken (2020): The Development Effects of the Extractive Colonial Economy

- Exploits spatial variation in the rollout of the Dutch Cultivation System in Java to find that areas near historical sugar factories are wealthier today due to persistent infrastructure and industrialization.

- Bazzi et al. (2020): Frontier Culture: The Roots and Persistence of “Rugged Individualism”

- Tracks the historical moving frontier line across the U.S. to demonstrate that longer exposure to frontier conditions generates a persistent culture of individualism and opposition to redistribution.

- Michalopoulos and Xue (2021): Folklore

- Analyzes the spatial distribution of folklore motifs across roughly 1,000 societies to show how oral traditions reflect and shape persistent cultural values like risk aversion and trust.

Economic Studies Using Spatial Variation

- Montero and Yang (2022): Religious Festivals and Economic Development: Evidence from the Timing of Mexican Saint Day Festivals

- Leverages random spatial and temporal variation in the dates of saint day festivals in Mexico to show that festivals coinciding with planting seasons lower long-run income by crowding out agricultural investment.

- Valencia Caicedo (2019): The Mission: Human Capital Transmission, Economic Persistence, and Culture

- Uses distance to historical Jesuit missions in Argentina, Brazil, and Paraguay to show that areas closer to missions have higher modern literacy and income due to the persistent transmission of human capital and occupational skills.

- Ambrus et al. (2020): Loss in the Time of Cholera: Long-Run Impact of a Disease Epidemic

- Exploits the spatial boundary of the Broad Street water pump catchment area in 1854 London (famous from John Snow’s map) to show that the localized cholera outbreak caused a permanent neighborhood downgrade as the wealthy fled and poverty became entrenched.

Recapitulation

Recapitulation

- Location matters in economics:

- Spatial relationships (proximity, contiguity, distance) often drive economic phenomena—ignoring them risks omitted variable bias and misspecified models.

- Two fundamental data models:

- Vector data (points/lines/polygons) for discrete features—ideal for administrative boundaries, firm locations, and networks.

- Raster data (gridded cells) for continuous surfaces—essential for satellite imagery, climate variables, and density measures.

- Always verify coordinate reference systems before analysis. Geographic CRS (lat/lon) ≠ projected CRS (meters)—mismatched CRS causes silent errors in distances, areas, and joins.

Recapitulation

- Spatial operations enable economic questions:

- Spatial joins link observations without shared IDs (e.g., firms → districts).

- Buffers define zones of influence (e.g., market catchments).

- Topology & distance quantify spatial relationships (e.g., competition intensity).

sf(vector) andstars(raster) enable spatial analysis that’s transparent, scriptable, and publication-ready.- Begin with vector operations (joins, centroids, buffers) before advancing to raster/time-series analysis. Most economic applications live in the vector world.

Lecture 7: Spatial Data